Delegation Interaction: The AI Collaboration Revolution from Tool-Using to Mentorship

Introduction: When Your AI Assistant Has the Memory of a Goldfish

Imagine this scenario: every morning you walk into the office and face a brand-new assistant — someone who doesn’t remember what you discussed yesterday, doesn’t know your work habits, and isn’t even aware of your company’s basic business processes. You need to explain everything from scratch, every single day, over and over again.

This isn’t the plot of some absurd comedy. It’s the everyday reality of how most people interact with AI.

Over the past few years, AI technology has experienced explosive growth. From ChatGPT sweeping the globe to the proliferation of various large language models, people were thrilled to discover that AI could write articles, code programs, translate languages, and perform data analysis. However, once the novelty wore off, a deeper problem surfaced — our interaction model with AI is still fundamentally stuck at the level of “one-time tool usage.”

Every conversation is an island. The carefully crafted prompts, the painstakingly refined output styles, and the hard-won contextual understanding you built up — all of it vanishes the moment you close the chat window. Next time, you’re facing a blank slate again.

This interaction model has three fundamental pain points:

First, nothing accumulates. You spend thirty minutes teaching AI your writing style, but that thirty-minute investment never becomes a lasting asset. Tomorrow you’ll need another thirty minutes, and the day after that. Knowledge cannot accumulate, experience cannot be passed on — every interaction is a fresh expenditure starting from zero.

Second, you can’t truly delegate. You can ask AI to draft an email, but you can’t actually “delegate” real work to it — the kind that involves understanding context, making plans, executing steps, handling exceptions, and delivering results. Traditional AI can only do “you ask, I answer.” It’s a passive responder, not an active executor.

Third, you can’t trust it. Even when AI gives you an answer, it’s nearly impossible to trace its reasoning process. Why did it make this decision? What steps did it go through? Did it miss any critical information? This opacity makes it impossible to confidently hand over important work.

These three pain points all point to the same fundamental issue: traditional AI interaction defines the relationship between humans and AI as “user and tool,” and tools have no memory, no growth, and no initiative.

So is there a fundamentally new paradigm that could transform this relationship entirely?

The answer is yes. It’s the Delegation Interaction model, proposed and implemented by DesireCore.

Part One: What Is Delegation Interaction?

1.1 Redefining the Human-AI Relationship

The core philosophy of delegation interaction can be summarized in one sentence: Redefine the relationship between humans and AI from “user and tool” to “mentor and apprentice.”

This isn’t just a metaphor — it’s a complete interaction paradigm. In this paradigm, AI is no longer a passive tool waiting for commands but a “digital apprentice” that can be taught, can accept tasks, can execute independently, and can deliver results.

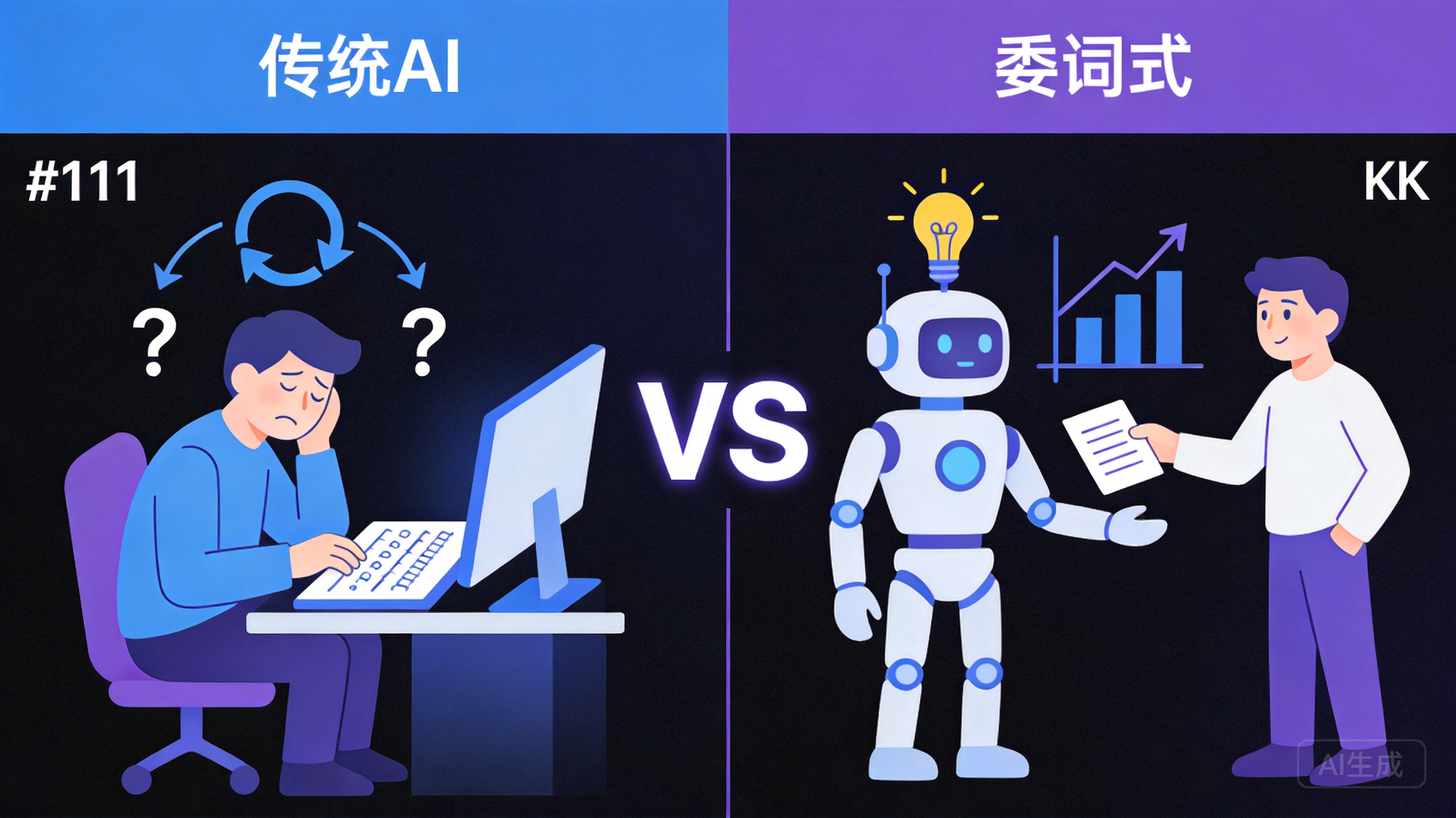

Let’s understand the profound significance of this transformation through a comparison.

When you use traditional AI tools, your behavioral pattern looks like this:

- Open the tool

- Input a question or command

- Wait for a response

- Manually use the response

- Close the tool

This is a classic Command-Response pattern. The role AI plays here is essentially no different from a calculator — you input a formula, it outputs a result. The only difference is that the “formulas” it can handle are more complex and diverse.

In delegation interaction, your collaboration model with AI transforms into this:

- Teach it rules and preferences

- Demonstrate the correct approach

- Let it try handling tasks independently

- Review results and provide feedback

- Gradually let go and let it take on more

This is a Teach-Grow-Delegate pattern. The role AI plays here is that of an apprentice — it has the capacity to learn, the ability to remember, the initiative to execute, and the flexibility to accept correction.

1.2 Paradigm Shifts Across Five Dimensions

To understand the differences between delegation interaction and traditional AI interaction more clearly, let’s compare them across five core dimensions:

Dimension One: The Nature of the Relationship

Traditional AI operates on a “user and tool” relationship. The user issues commands; the tool passively responds. The tool never proactively does anything, nor does it adapt to the user’s habits in any way.

Delegation interaction operates on a “mentor and apprentice” relationship. The mentor teaches the apprentice, and the apprentice learns and grows. Over time, the apprentice understands the mentor’s needs better, can handle more work, and requires less guidance.

Dimension Two: Memory

Traditional AI starts from scratch with every conversation. All the context, preferences, and conventions accumulated in the previous conversation are not retained. Every interaction is a one-time expenditure.

In delegation interaction, the agent retains what it learns. The rules you teach it, the examples you demonstrate, the adjustments it makes from feedback — all persist. What you teach today doesn’t need to be taught again tomorrow.

Dimension Three: Capability Boundaries

Traditional AI can only answer questions. It’s a response machine whose capability stops at “providing a reply.” How that reply gets used and what follow-up actions are needed fall outside its scope of responsibility.

In delegation interaction, the agent can accept tasks, formulate plans, execute steps, and deliver results. It’s a complete task-processing unit with end-to-end capabilities from understanding to delivery.

Dimension Four: Growth Trajectory

Traditional AI has no growth to speak of. Your conversations do not change it: given identical inputs, the first conversation and the hundredth perform the same.

In delegation interaction, the agent becomes more reliable with increased usage. It accumulates knowledge from every teaching session, corrects behavior from every piece of feedback, and gains experience from every task. This is a genuine learning curve.

Dimension Five: Transparency and Control

Traditional AI’s decision-making process is opaque. You can only see the final output, unable to understand the intermediate process. If the result is unsatisfactory, your only option is to re-ask the question.

Delegation interaction makes the entire process traceable. Every operation is recorded, and every decision has a rationale. You can pause, correct, or roll back at any time, so you keep complete control over the AI’s behavior.

1.3 Why “Mentorship” Is the Best Metaphor

We chose “mentor and apprentice” rather than “manager and employee” or “teacher and student” to describe the delegation interaction relationship model after careful deliberation.

The mentorship relationship has several unique qualities that perfectly align with the design philosophy of delegation interaction:

First, knowledge transfer is gradual. A mentor doesn’t dump all knowledge onto the apprentice at once but teaches progressively based on the apprentice’s current skill level. Start with fundamentals, then advanced techniques, and finally high-level mastery. Delegation interaction works the same way — you first teach the agent basic rules, then deepen understanding through examples, and finally gradually let it handle complex tasks independently.

Second, practice is at the heart of learning. The defining feature of apprenticeship is “learning by doing.” The apprentice isn’t memorizing theory in a classroom but getting hands-on experience in real work scenarios. Made a mistake? The mentor corrects it. Did it right? The mentor confirms. Delegation interaction adopts the same philosophy — the agent doesn’t understand your needs in the abstract but continuously calibrates through actual task execution.

Third, trust is built incrementally. No mentor hands over their most important work to an apprentice on day one. Trust is accumulated through successive successful deliveries. Delegation interaction follows the same logic — the agent starts with simple tasks, and as its performance stability improves, it’s gradually delegated more complex and critical work.

Fourth, the ultimate goal is independence. The end goal of mentorship isn’t to hand-hold forever but to enable the apprentice to eventually work independently. Delegation interaction shares this vision — through continuous teaching and feedback, the agent ultimately handles work with high autonomy, only reaching out when confirmation is needed.

Part Two: A Detailed Look at the Six Primitives Framework

Delegation interaction isn’t an abstract concept — it’s an actionable methodology. At the core of this methodology is the Six Primitives Framework — Teach, Demonstrate, Query, Establish, Execute, and Review.

These six primitives cover the complete lifecycle of human-agent interaction, from knowledge transfer to task execution, from feedback confirmation to result review. Each primitive has a clear definition, applicable scenarios, and best practices.

2.1 Teach: Conveying Rules Through Natural Language

Definition

“Teach” is the first primitive in the Six Primitives Framework and the starting point of the entire delegation interaction. Its essence is: convey rules, preferences, and constraints to the agent through natural language.

No coding required, no parameter configuration needed, no special syntax to learn. You simply express your requirements the way you naturally would, and the agent understands and internalizes them as behavioral guidelines.

Use Cases

The “Teach” primitive is applicable in the following scenarios:

- Establishing fundamental rules: Tell the agent about your work methods, preferences, and red lines. For example: “When replying to customer emails, the tone should be professional but not stiff, and the opening must address the person by name.”

- Defining business logic: Communicate your business rules to the agent. For example: “Orders exceeding $5,000 must be confirmed by me before processing.”

- Setting output standards: Specify the format and standards for the agent’s output. For example: “The weekly report format is: completed items first, then next week’s plan, then items requiring coordination. Keep each item to three lines or fewer.”

- Communicating priorities: Help the agent understand what matters more. For example: “Response speed takes priority over perfection — if you can reply within two hours, don’t wait until the next day.”

Concrete Example

Suppose you’re the operations lead for an e-commerce team. You might “teach” your agent like this:

“When handling customer complaints, follow these rules:

- First, express apology and understanding

- Confirm the specific details of the issue

- Provide a solution — prioritize returns/exchanges, followed by compensation coupons

- Refunds exceeding $500 need special flagging

- Maintain a warm, professional tone throughout — never argue with customers”

Once taught, the agent will automatically follow these rules when handling related tasks, without you needing to repeat them each time.

Rule Hierarchy

In practice, rules aren’t a flat list but a hierarchical structure:

- Core rules: Inviolable bottom lines such as compliance requirements, safety guidelines, and privacy protection

- Business rules: Process and standards related to specific business operations

- Preference rules: Non-mandatory style and habit preferences

- Temporary rules: Temporary agreements for specific periods or projects

The agent understands these hierarchies and makes priority-based judgments when rules conflict. For example, when your preference rule (“keep replies concise”) conflicts with a business rule (“refund explanations must include full terms”), the agent will prioritize the business rule.
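The priority-based judgment described above can be sketched in a few lines of Python. This is only an illustration, not DesireCore’s implementation: the names `RuleLevel`, `Rule`, and `resolve_conflict` are hypothetical, and the sketch assumes that the listed order doubles as the priority order, which the text confirms only for business rules over preference rules.

```python
from dataclasses import dataclass
from enum import IntEnum


class RuleLevel(IntEnum):
    # Assumption: a lower number means higher priority, following the listed order.
    CORE = 0
    BUSINESS = 1
    PREFERENCE = 2
    TEMPORARY = 3


@dataclass(frozen=True)
class Rule:
    level: RuleLevel
    text: str


def resolve_conflict(conflicting: list[Rule]) -> Rule:
    """Return the rule that wins when several rules conflict."""
    if not conflicting:
        raise ValueError("no rules to resolve")
    # min() returns the first rule with the lowest level value,
    # so ties go to the rule that appears earlier in the list.
    return min(conflicting, key=lambda rule: rule.level)


concise = Rule(RuleLevel.PREFERENCE, "Keep replies concise")
full_terms = Rule(RuleLevel.BUSINESS, "Refund explanations must include full terms")

winner = resolve_conflict([concise, full_terms])
print(winner.text)  # prints "Refund explanations must include full terms"
```

Where temporary rules should rank is a design choice the sketch does not settle; a project-specific agreement might reasonably outrank a general preference.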

Characteristics of Quality Rules

A good rule should possess the following characteristics:

- Clear: Avoid vague language. “Handle it well” is less effective than “processing time should not exceed 24 hours, and replies should be at least three lines.”

- Actionable: Rules should be behavioral guidelines the agent can directly follow, not abstract philosophies. “Show empathy” is less effective than “begin the reply by restating the customer’s issue and expressing that you understand their frustration.”

- Bounded: Rules should clearly define their scope of application. “All emails should be formal” may not reflect your true intent — internal emails to colleagues might benefit from a more casual style.

- Verifiable: Good rules should be checkable. “Have a good attitude” is hard to verify, but “don’t use negative sentence structures or rhetorical questions” is verifiable.
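To make “verifiable” concrete, here is a minimal sketch of how two of the rules quoted in this section (a reply of at least three lines, and an opening that addresses the customer by name) could be checked mechanically. The function `check_reply` and its violation messages are hypothetical, and the name check is a plain substring test chosen for brevity.

```python
def check_reply(reply: str, customer_name: str) -> list[str]:
    """Return the violated rules; an empty list means the reply passes."""
    violations = []
    # Ignore blank lines so spacing does not inflate the line count.
    lines = [line for line in reply.splitlines() if line.strip()]
    if len(lines) < 3:
        violations.append("reply must be at least three lines")
    if not lines or customer_name not in lines[0]:
        violations.append("opening must address the customer by name")
    return violations


good_reply = (
    "Dear Ms. Li,\n"
    "We are sorry about the static noise in your speaker.\n"
    "We will arrange a return for you today."
)
print(check_reply(good_reply, "Ms. Li"))  # prints []
```

A rule such as “have a good attitude” has no equivalent check, which is exactly why it is hard to verify.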

Common Pitfalls

There are several common mistakes to avoid when using the “Teach” primitive:

- Too many rules: Overwhelming the agent with too many rules at once can make its behavior rigid and contradictory. Start with the most critical 3-5 rules and gradually add more as collaboration deepens.

- Contradictory rules: Having “keep replies concise” and “keep replies comprehensive” simultaneously will leave the agent paralyzed. Ensure consistency between rules.

- Overly absolute rules: Rules like “never use any abbreviations whatsoever” might cause issues in certain contexts. Consider adding exception conditions.

- Neglecting updates: Business rules change, but people often forget to update the agent’s rule base. Regularly reviewing and updating rules is key to maintaining agent effectiveness.

2.2 Demonstrate: Providing Concrete Examples and Counterexamples

Definition

“Demonstrate” is the second primitive in the framework. If “Teach” tells the agent “what to do,” then “Demonstrate” shows the agent “what the result should look like.”

Human learning relies heavily on imitation. We watch how someone else does something, then try to replicate it ourselves. Agent learning works the same way — rules provide direction, while examples let it grasp the specific standards and nuances.

Use Cases

The “Demonstrate” primitive is particularly effective in the following scenarios:

- When rules are hard to precisely describe: Some standards are difficult to fully capture with rules, but a few examples can help the agent “get the feel.” For example, your brand’s copywriting style might be hard to articulate in a few rules, but showing five excellent copy examples enables the agent to capture that essence.

- When there are many edge cases: Rules handle “normal situations,” but reality always has exceptions. By providing examples that include edge cases, the agent learns how to make judgments in gray areas.

- When precise output format alignment is needed: Rather than verbally describing format specifications, simply provide a perfect output sample. What you see is what you get.

- When you need to show “what not to do”: Counterexamples (negative examples) are often more instructive than positive ones, because they mark where the boundary lies. Showing the agent both “good replies” and “bad replies” sharpens its judgment.

Positive and Negative Examples

The key technique in example-based teaching is to provide positive and negative examples together. This is less an optional best practice than a near requirement.

Positive examples showcase the ideal state — “this is what I want.” They help the agent understand the target standard.

Negative examples showcase the state to avoid — “this is what I don’t want.” They help the agent understand prohibited areas and bottom lines.

Let’s look at a concrete example. Suppose you’re teaching the agent to write product descriptions:

Positive example:

“This wireless noise-canceling headphone features adaptive noise cancellation technology that automatically adjusts noise reduction levels based on ambient sound. With 40-hour ultra-long battery life, you can forget about charging anxiety. Its ergonomic design ensures comfort during extended wear. Perfect for commuting, office work, and travel.”

Negative example:

“This is a really great pair of headphones with amazing sound quality. They’re super comfortable and the battery lasts forever. If you love listening to music, these headphones are absolutely the best choice! Highly recommend buying them!”

Through this pair of positive and negative examples, the agent intuitively learns that product descriptions should focus on specific features and data rather than piling on subjective evaluations; should explain use cases rather than making generic recommendations; and should use restrained, professional language rather than excessive enthusiasm.

Quantity and Diversity of Examples

When providing examples, balance quantity and diversity:

- Too few examples (1-2) may cause the agent to overfit to the specific details of these particular samples rather than learning the general pattern.

- Too many examples (more than 10) may confuse the agent with differences between examples, preventing it from distilling core patterns.

- Best practice is to provide 3-5 positive examples and 2-3 negative examples, ensuring sufficient diversity among examples to cover different typical scenarios.

2.3 Query: Asking Questions and Getting Feedback

Definition

“Query” is the third primitive in the framework. It implements a bidirectional feedback channel — not only can the user ask questions of the agent, but the agent can also proactively ask the user questions to confirm understanding.

This is an important feature that distinguishes delegation interaction from traditional AI interaction. Traditional AI typically tries to give an answer even when it is uncertain about what was asked. In delegation interaction, the agent is designed to ask when uncertain rather than guess.

Use Cases

The “Query” primitive functions in the following scenarios:

- Rule comprehension confirmation: After you teach the agent a new rule, it may ask questions to confirm its understanding is correct. “You mentioned ‘formal occasions’ — does that mean client meetings and external reports? Does an internal weekly meeting count?”

- Edge case consultation: When the agent encounters a situation not covered by its rules, it proactively asks for guidance. “This customer’s complaint involves a product defect. Per the rules, I should prioritize returns/exchanges, but the customer specifically wants a cash refund. How should I proceed?”

- Task clarification: When you delegate a task, the agent confirms its understanding. “Should I compile the data from last Monday through Friday, or include the weekend as well? Should the report follow the same template as last time?”

- User-initiated queries: You can also ask the agent questions at any time to assess its current knowledge state. “What’s your current understanding of our refund process? Walk me through the rules.”

The Value of Good Questions

In traditional AI interaction, we’re accustomed to AI always “having an answer for everything.” But in delegation interaction, a good “I’m not sure” is worth far more than a wrong “I know.”

The agent’s proactive questioning behavior reflects an important design principle: It’s better to ask one more question than to make one wrong move. This perfectly mirrors the behavior of excellent apprentices in real life — a good apprentice doesn’t take matters into their own hands when uncertain but first confirms with the mentor.

The benefits of this mechanism are clear:

- Fewer errors: By confirming understanding before execution, errors caused by misunderstanding are drastically reduced.

- Faster calibration: Every question-and-answer exchange is an opportunity for fine-tuning, helping the agent align more quickly with your true needs.

- Building trust: When you discover that the agent asks you when uncertain rather than blindly executing, your trust in it increases significantly.
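The “ask rather than guess” principle can be illustrated with a small sketch. It assumes, purely for illustration, that rules are stored as a lookup from a situation label to an action; the function `decide` and that storage format are hypothetical and say nothing about how a real agent represents its knowledge.

```python
def decide(situation: str, rules: dict[str, str]) -> tuple[str, str]:
    """Act when a rule covers the situation; otherwise ask the user."""
    action = rules.get(situation)
    if action is None:
        # No rule applies, so the agent raises a question instead of guessing.
        return ("ask", f"No rule covers '{situation}'. How should I proceed?")
    return ("act", action)


rules = {"damaged product": "offer return or exchange"}

print(decide("damaged product", rules))
# prints ('act', 'offer return or exchange')
print(decide("customer insists on cash refund", rules))
# prints ('ask', "No rule covers 'customer insists on cash refund'. How should I proceed?")
```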

2.4 Establish: Confirming Learning Outcomes

Definition

“Establish” is the fourth primitive in the framework. Its function is mutual confirmation of learning outcomes — ensuring that what the agent has learned is correct and that the mentor knows the apprentice’s current capability level.

If “Teach” is the input, “Demonstrate” the supplement, and “Query” the calibration, then “Establish” is the lock-in: the step that formally confirms verified knowledge and capabilities.

Use Cases

The “Establish” primitive functions in the following scenarios:

- Milestone confirmation: After completing a round of teaching, confirming that the agent has correctly grasped the relevant knowledge. “Alright, walk me through your understanding of the customer refund process.”

- Capability upgrade confirmation: When the agent demonstrates ability to handle more complex tasks, formally acknowledging this capability upgrade. “You’ve been very stable handling basic complaints. Next, I’ll start teaching you how to handle complex complaints involving legal clauses.”

- Rule change confirmation: When business rules change, confirming that the agent has updated its relevant knowledge. “The new refund policy is now in effect. Please confirm you’ve updated your processing rules according to the new policy.”

- Consensus building: After reaching consensus through discussion on ambiguous areas, formally recording the consensus. “Let’s settle this — for VIP customers, even if they exceed the normal return/exchange period, we can handle it flexibly.”

The Difference Between “Establish” and “Teach”

“Teach” is one-way knowledge transfer — the mentor speaks, the apprentice listens. “Establish” is a bidirectional confirmation mechanism — confirming not only that knowledge was received but that it was correctly understood.

A good “Establish” process typically includes the following steps:

- The agent restates the rules or capabilities it understands

- The user reviews whether the restatement is accurate

- If there are deviations, corrections are made (returning to “Teach” or “Demonstrate”)

- Once confirmed accurate, the knowledge point is formally “established”

This process ensures teaching effectiveness — it’s not enough for the mentor to have said it; the apprentice must have understood it.
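The restate, review, correct, confirm sequence can be read as a short loop. The sketch below is one possible reading under stated assumptions: `establish` and its three callbacks are hypothetical names, and the cap on rounds (`max_rounds`) is an addition that the text does not mention.

```python
from typing import Callable, Optional


def establish(
    restate: Callable[[], str],
    review: Callable[[str], Optional[str]],
    teach: Callable[[str], None],
    max_rounds: int = 3,
) -> bool:
    """Loop until the user accepts the agent's restatement or rounds run out."""
    for _ in range(max_rounds):
        restatement = restate()           # the agent restates what it understood
        correction = review(restatement)  # the user returns None when it is accurate
        if correction is None:
            return True                   # the knowledge point is established
        teach(correction)                 # otherwise go back to Teach
    return False


# Toy agent state for the demo: it starts with an incomplete sign-off.
knowledge = {"sign_off": "Customer Service"}
expected = "XX Company Customer Service Team"

established = establish(
    restate=lambda: f"Sign off with '{knowledge['sign_off']}'",
    review=lambda text: None if expected in text else expected,
    teach=lambda correction: knowledge.update(sign_off=correction),
)
print(established)  # prints True
```

In the demo the first restatement is rejected, the correction is taught, and the second restatement is accepted.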

2.5 Execute: Carrying Out Tasks

Definition

“Execute” is the fifth primitive and the core value proposition of delegation interaction. The preceding four primitives (Teach, Demonstrate, Query, Establish) all prepare for “Execute” — the ultimate purpose is to enable the agent to independently execute real tasks and deliver results.

Use Cases

The “Execute” primitive covers all scenarios requiring actual agent execution:

- Daily task processing: Such as organizing data, writing reports, replying to emails, updating documents, etc.

- Process-driven work: Such as processing approvals according to established procedures, executing checklists, conducting regular inspections, etc.

- Analytical tasks: Such as market data analysis, competitor comparisons, trend forecasting, etc.

- Creative work: Such as writing marketing copy, designing event plans, conceiving content strategies, etc.

The Complete Execution Flow

Unlike traditional AI, which provides answers directly, task execution in delegation interaction follows a rigorous process:

- Receive the task: The agent receives the task you’ve delegated

- Confirm understanding: The agent confirms its understanding of the task, including objectives, scope, constraints, and expected deliverables

- Formulate a plan: The agent creates an execution plan, including step breakdown, resource requirements, and time estimates

- Plan approval: The plan is submitted for your confirmation, and execution begins only after approval. This is an important safety mechanism in delegation interaction — the agent won’t act without your knowledge

- Step-by-step execution: Execute according to the approved plan

- Exception handling: When encountering unexpected situations during execution, pause and consult you

- Deliver results: After completing all steps, deliver results accompanied by an execution receipt
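The flow above can be sketched as a single function with an approval gate in front of execution. This is a simplified illustration, not the product’s API: `run_task`, `ExecutionReceipt`, and the status strings are hypothetical, and an exception raised by a step stands in for “an unexpected situation.”

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ExecutionReceipt:
    task: str
    completed_steps: list[str] = field(default_factory=list)
    status: str = "pending"


def run_task(
    task: str,
    plan: list[str],
    approve: Callable[[list[str]], bool],
    execute_step: Callable[[str], None],
) -> ExecutionReceipt:
    """Run an approved plan step by step and return a receipt."""
    receipt = ExecutionReceipt(task=task)
    # Plan approval: nothing runs until the user confirms the plan.
    if not approve(plan):
        receipt.status = "rejected"
        return receipt
    for step in plan:
        try:
            execute_step(step)
        except Exception as exc:
            # Exception handling: stop here and hand control back to the user.
            receipt.status = f"paused: {exc}"
            return receipt
        receipt.completed_steps.append(step)
    receipt.status = "delivered"
    return receipt


receipt = run_task(
    task="Reply to Ms. Li",
    plan=["draft reply", "check against rules", "send"],
    approve=lambda plan: True,       # stand-in for the user's confirmation
    execute_step=lambda step: None,  # stand-in for real work
)
print(receipt.status)  # prints "delivered"
```

The receipt records which steps finished, so a paused or rejected task still leaves a trace that can be reviewed.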

Levels of Task Delegation

Based on the agent’s maturity and task complexity, delegation can be divided into different levels:

- Fully guided: Every step requires your confirmation before proceeding. Suitable for the stage when the agent is just beginning to learn a new type of task.

- Key checkpoint confirmation: The agent can autonomously execute most steps but requires your confirmation at key decision points. Suitable for when the agent has some foundation but isn’t yet mature.

- Result review: The agent executes fully autonomously and submits results for your review upon completion. Suitable for when the agent has proven very reliable with this type of task.

- Highly autonomous: The agent executes autonomously and delivers directly, notifying you only in exceptional circumstances. Suitable for mature stages of established trust.
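One way to picture the four levels is as a policy that decides when the agent must stop and involve you. The sketch below is an interpretation rather than a specification: `DelegationLevel` and `needs_user_confirmation` are hypothetical names, and it assumes that exceptions always reach the user, in line with the execution flow described earlier.

```python
from enum import Enum


class DelegationLevel(Enum):
    FULLY_GUIDED = 1
    KEY_CHECKPOINT = 2
    RESULT_REVIEW = 3
    HIGHLY_AUTONOMOUS = 4


def needs_user_confirmation(
    level: DelegationLevel,
    *,
    is_key_checkpoint: bool = False,
    is_final_result: bool = False,
    is_exception: bool = False,
) -> bool:
    """Decide whether the agent should stop and involve the user."""
    if is_exception:
        return True  # assumption: exceptions reach the user at every level
    if level is DelegationLevel.FULLY_GUIDED:
        return True  # every step is confirmed
    if level is DelegationLevel.KEY_CHECKPOINT:
        return is_key_checkpoint
    if level is DelegationLevel.RESULT_REVIEW:
        return is_final_result
    return False  # HIGHLY_AUTONOMOUS: deliver directly


print(needs_user_confirmation(DelegationLevel.KEY_CHECKPOINT, is_key_checkpoint=True))
# prints True
print(needs_user_confirmation(DelegationLevel.HIGHLY_AUTONOMOUS, is_final_result=True))
# prints False
```

Whether the final result is also reviewed at the key checkpoint level is not stated in the text, so the sketch leaves it out.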

2.6 Review: Examining and Adjusting

Definition

“Review” is the final primitive in the framework and a critical link in the complete loop. Its function is to examine execution results and make necessary adjustments based on review findings.

“Review” isn’t just about checking whether results are correct — more importantly, it’s about extracting lessons from execution, identifying areas for improvement, and updating the knowledge base.

Use Cases

The “Review” primitive functions in the following scenarios:

- Deliverable review: Checking whether the agent’s deliverables meet expected standards

- Process retrospective: Reviewing the execution process to identify opportunities for process optimization

- Error analysis: When results are unsatisfactory, analyzing causes and making targeted adjustments

- Rule iteration: Updating or supplementing the rule system based on actual execution experience

- Capability assessment: Evaluating the agent’s capability level for specific task types to determine whether to increase the delegation level

The Loop Created by “Review”

The existence of the “Review” primitive creates a complete learning cycle within the Six Primitives Framework:

Teach → Demonstrate → Query → Establish → Execute → Review → (return to Teach/Demonstrate for adjustments)

This cycle means the agent’s capabilities aren’t static but dynamically evolving. Each “Review” can trigger new rounds of “Teach” or “Demonstrate,” continuously elevating the agent’s capability level.

Review Methodology

Effective review should focus on the following aspects:

- Result quality: Did the deliverables meet expected standards? What exceeded expectations? What fell short?

- Process efficiency: Was the execution process smooth? Were there unnecessary steps? Were steps missing?

- Rule effectiveness: Were existing rules sufficient to guide execution? Are new rules needed? Do existing rules need modification?

- Exception handling: How were exceptions encountered during execution handled? Was the handling approach reasonable? Should new exception-handling rules be established?

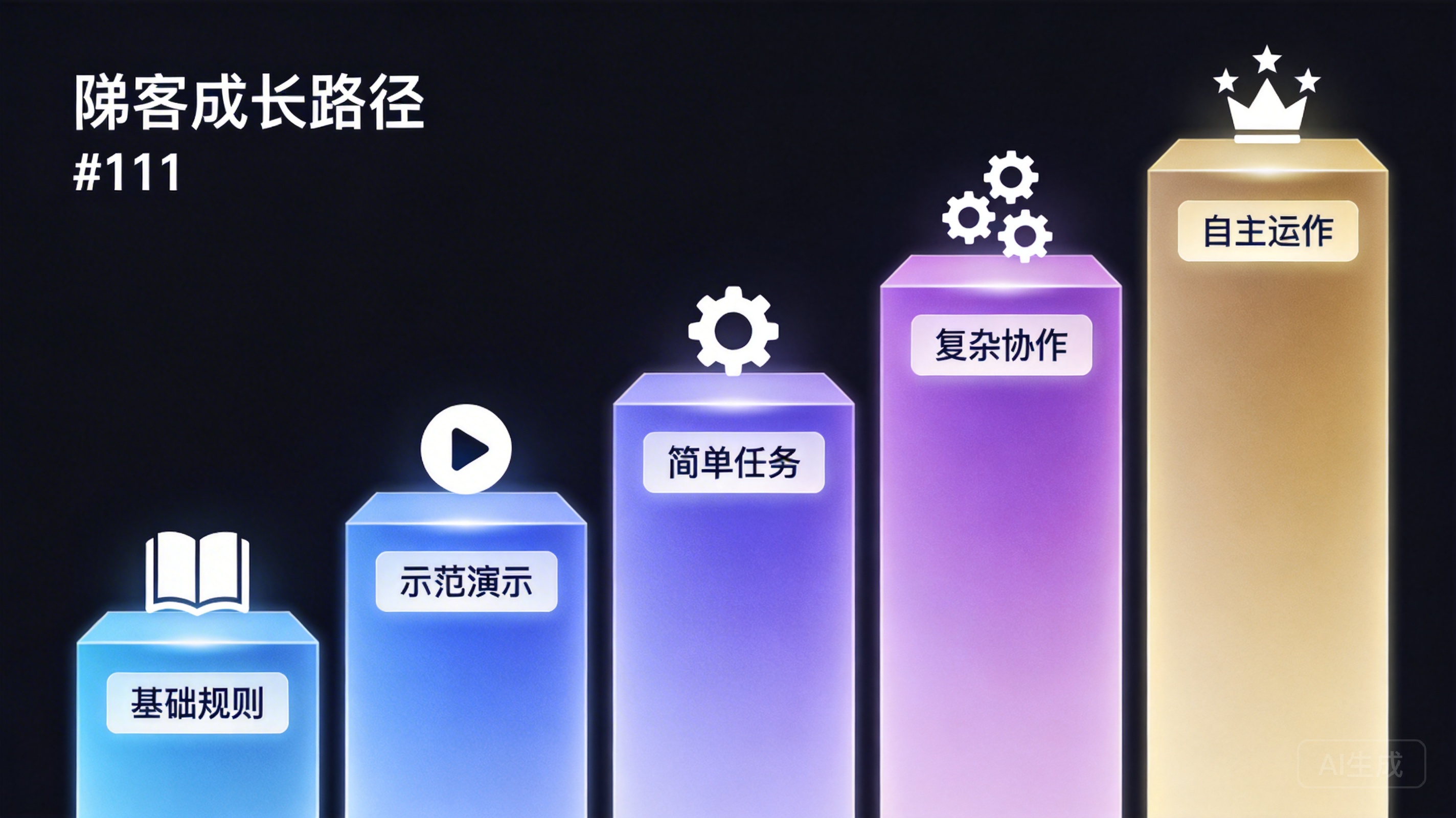

Part Three: From Teaching to Doing — The Agent’s Learning Growth Path

With the Six Primitives Framework understood, a natural question arises: How do you systematically apply these primitives to guide an agent from scratch, gradually developing it into a reliable work partner?

DesireCore has designed a clear learning growth path divided into five stages.

3.1 Stage One: Foundational Rule Teaching

This is where everything begins. In this stage, your primary work is using the “Teach” primitive to convey fundamental rules to the agent.

Core Tasks:

- Establish identity awareness: Help the agent understand its role and scope of responsibilities

- Set baseline standards: Such as output format, language style, response time, etc.

- Communicate core rules: The most important 5-10 business rules

- Define boundaries: Clearly establish what is absolutely off-limits

Key Principles for This Stage:

- Start small, then expand: Don’t try to teach the agent all rules at once. Begin with the most critical rules, ensure each one is correctly understood, then add new ones.

- Start simple, then complicate: Teach simple, clear rules first, then gradually introduce complex rules with conditions and exceptions.

- Confirm promptly: After teaching each group of rules (recommended 3-5 per group), use the “Establish” primitive to confirm that the agent’s understanding aligns with your intent.

Typical Interaction Example:

User (Teach): “You are our customer service team’s AI assistant. Your primary responsibility is handling customer emails. When replying, use a formal but friendly tone, address the person by name at the beginning, and sign off with ‘XX Company Customer Service Team.’”

Agent (Establish): “I understand. My role is a customer service AI assistant, primarily handling customer emails. Replies should be formal but friendly, address the customer by name at the beginning, and sign off with ‘XX Company Customer Service Team.’ Is this understanding correct?”

User (Establish): “Correct.”

3.2 Stage Two: Demonstration

After establishing foundational rules, enter the demonstration stage. This stage primarily uses the “Demonstrate” primitive to deepen the agent’s understanding of rules through concrete examples.

Core Tasks:

- Provide positive and negative examples for key rules

- Show how rules apply concretely in different scenarios

- Specifically demonstrate handling of edge cases and exceptions

- Showcase your personal preferences and style

Key Principles for This Stage:

- Pair positives with negatives: Every key knowledge point should have both positive and negative examples, helping the agent understand both ends of the standard.

- Vary scenarios: Examples should cover multiple scenarios to prevent the agent from only learning how to handle one specific situation.

- Verify progressively: After presenting each set of examples, have the agent attempt a similar case to test whether it has truly learned.

Typical Interaction Example:

User (Demonstrate): “Here’s an example of a good refund reply: ‘Dear Mr. Zhang, we sincerely apologize for the inconvenience. I’ve confirmed that your order from April 5th had a damaged product issue. We will process a full refund to your original payment account within 3 business days. You’ll receive an SMS notification once the refund arrives. If you have any other questions, please don’t hesitate to contact us. XX Company Customer Service Team’”

User (Demonstrate): “Here’s an example of a poor refund reply: ‘Hello, we’ve received your refund request and will process it as soon as possible.’ — This reply lacks specific information, doesn’t express apology, and provides no clear timeline.”

Agent (Query): “I notice the good example includes the specific order date, problem description, processing timeline, and follow-up notification method. Should all refund replies include these four elements?”

User (Establish): “Yes, these four elements are required components of refund replies.”

3.3 Stage Three: Independent Handling of Simple Tasks

After the first two stages of teaching and demonstration, the agent has built the knowledge foundation for handling basic tasks. Now it’s time to let it “dive in.”

Core Tasks:

- Assign the agent simple, low-risk real tasks

- Conduct detailed review and feedback after each task completion

- Provide targeted supplementary teaching based on review results

- Gradually reduce intervention frequency in the execution process

Key Principles for This Stage:

- Small steps, quick iterations: Delegate only one simple task at a time, complete it quickly, and give quick feedback. Don’t start with complex, large-scale tasks.

- Tolerance for errors: The purpose of this stage is learning, not output. Be patient with minor execution errors and focus on whether errors fall within expected ranges.

- Positive reinforcement: When the agent does something right, explicitly tell it “this was done well because…” Positive feedback is just as important as negative feedback.

- Pattern recognition: Watch for whether the agent repeatedly makes the same type of error. If so, the corresponding rules or examples need strengthening.

Typical Interaction Example:

User (Execute): “Help me reply to this customer email. Customer Ms. Li reports that her purchased Bluetooth speaker has static noise issues and wants to return it.”

Agent (Execute): Submits execution plan and draft reply

User (Review): “The overall structure of the reply is good, but there’s one issue — you forgot to mention our 7-day no-questions-asked return policy. This information is important to customers and builds trust. Please revise and resubmit.”

Agent (Execute): Revises and resubmits

User (Review): “This version is good. Remember, mentioning the return policy is standard for all return-related replies.”

3.4 Stage Four: Assisting with Complex Work

Once the agent’s performance on simple tasks is stable and reliable, begin delegating more complex work.

Core Tasks:

- Gradually increase task complexity and scope

- Allow the agent to autonomously execute longer task chains after key checkpoint confirmations

- Begin involving work that requires judgment, not just execution

- Develop the agent’s exception-handling capabilities

How Complex Work Differs from Simple Tasks:

Complex work typically has the following characteristics:

- Multi-step: Requires completing multiple steps in sequence, with dependencies between them

- Multi-conditional: Requires different handling approaches based on different situations

- Requires judgment: Some decisions can’t be fully covered by rules, requiring the agent to apply existing knowledge

- Consequential: Results may significantly impact the business, with less room for error

Key Principles for This Stage:

- Gradual release: Don’t grant full autonomy in one leap — progressively transition from “fully guided” to “key checkpoint confirmation.”

- Emphasize planning: Execution plan approval is particularly important for complex tasks. Investing more time in the planning phase avoids extensive rework during execution.

- Establish emergency protocols: Agree on clear “stop” conditions with the agent — under what circumstances must it pause execution and consult you.

- Regular reviews: Conduct comprehensive capability assessments periodically, not just single-task reviews.
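
The “stop” conditions agreed with the agent can be written down as explicit checks rather than left implicit. The sketch below is illustrative only: the `TaskContext` fields, the condition names, and the 500 threshold are assumptions made up for this example, not part of DesireCore.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class TaskContext:
    """Hypothetical snapshot of a task at a checkpoint."""
    refund_amount: float = 0.0
    mentions_legal: bool = False
    uncovered_by_rules: bool = False


# Each stop condition pairs a human-readable reason with a predicate.
StopCondition = Tuple[str, Callable[[TaskContext], bool]]

STOP_CONDITIONS: List[StopCondition] = [
    ("legal risk mentioned", lambda ctx: ctx.mentions_legal),
    ("refund above agreed limit", lambda ctx: ctx.refund_amount > 500),  # assumed limit
    ("no rule covers this situation", lambda ctx: ctx.uncovered_by_rules),
]


def reasons_to_stop(ctx: TaskContext) -> List[str]:
    """Return every triggered stop reason; an empty list means 'continue'."""
    return [reason for reason, check in STOP_CONDITIONS if check(ctx)]


ctx = TaskContext(refund_amount=3000)
print(reasons_to_stop(ctx))
```

Returning all triggered reasons, rather than stopping at the first, gives the user the full picture when the agent pauses to consult.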

3.5 Stage Five: Highly Autonomous Operation

This is the mature stage of delegation interaction. After accumulating experience through the previous four stages, the agent has developed a deep understanding of your business rules, preferences, and work methods, and can operate with high autonomy and minimal human intervention.

Characteristics of This Stage:

- The agent can independently handle most daily tasks, only requiring your intervention in exceptional circumstances

- The agent can proactively identify problems and opportunities, offering suggestions

- The agent’s judgment has been thoroughly validated, allowing autonomous decision-making within defined boundaries

- Your role shifts from “executor” to “reviewer” and “strategy setter”

Important Notes:

High autonomy doesn’t mean zero oversight. Even at this stage, the following activities remain necessary:

- Periodic spot checks: Randomly review the agent’s execution results to ensure quality hasn’t degraded

- Rule updates: Promptly update the rule base as the business environment changes

- Capability expansion: Continuously teach new knowledge and skills to expand the agent’s capability boundaries

- Exception retrospectives: Conduct deep retrospectives on every exceptional situation to extract lessons learned

Part Four: The Art of Teaching — Crafting Rules, Using Examples, and Handling Feedback

The Six Primitives Framework and the five-stage growth path provide the framework, but the framework’s effectiveness depends on execution quality. This section dives deep into the “technique” and “art” of teaching.

4.1 Methodology for Rule Teaching

Rule Structure

A complete rule should contain the following elements:

- Trigger condition (When): Under what circumstances does this rule apply?

- Behavioral requirement (What): What specifically needs to be done or avoided?

- Priority (Priority): When this rule conflicts with other rules, which takes precedence?

- Exception conditions (Exception): Are there special circumstances where this rule doesn’t apply?

For example, a well-written rule might look like this:

“When a customer email mentions legal proceedings or lawyers (trigger condition), immediately flag that email as ‘legal risk’ and forward it to me for handling — do not reply on your own (behavioral requirement). This rule takes priority over all other customer email handling rules (priority). Even if the customer is just mentioning it casually rather than making a formal threat, handle it this way (no exceptions).”
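
The four elements map naturally onto a small record type. The following is a minimal sketch, assuming a hypothetical `Rule` class and a numeric priority where a higher number wins; neither is a DesireCore format.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Rule:
    when: str       # trigger condition
    what: str       # behavioral requirement
    priority: int   # higher number wins on conflict (assumed convention)
    exceptions: List[str] = field(default_factory=list)  # empty means no exceptions

    def is_complete(self) -> bool:
        """A rule is usable only if both trigger and behavior are stated."""
        return bool(self.when.strip()) and bool(self.what.strip())


legal_risk = Rule(
    when="customer email mentions legal proceedings or lawyers",
    what="flag as 'legal risk' and forward to the user; do not reply",
    priority=100,
)
print(legal_risk.is_complete(), legal_risk.exceptions)
```

Writing a rule in this shape makes a missing trigger or an unstated priority visible before the rule is taught.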

Rule Granularity

Rules can’t be too broad or too narrow. Overly broad rules lack guidance; overly narrow rules lack flexibility.

Too broad: “Handle customer emails carefully.” — This rule says nothing. What does “carefully” mean? The agent can’t extract any actionable guidance from it.

Too narrow: “When replying to customer emails, the first line should be ‘Dear Mr./Ms. XX,’ the second line should be blank, the third line should say ‘Thank you for your message,’ the fourth line should say ‘Regarding the issue you mentioned…’” — This rule is too rigid and will quickly encounter situations where it doesn’t apply.

Just right: “When replying to customer emails, address the person by name at the beginning and express gratitude or apology (depending on the situation), then get straight to the heart of the issue and provide a specific solution or next steps. Keep the overall length between 150-300 words.” — This rule has clear behavioral guidance while retaining sufficient flexibility.

Rule Update Strategy

Rules aren’t write-once-and-done. The following situations require rule updates:

- Business changes: When company policies, product features, service standards, etc., change

- Gap discovery: When the agent encounters situations not covered by existing rules during execution

- Poor effectiveness: When a rule’s actual execution results don’t match expectations

- Over-constraint: When a rule proves too strict in practice, limiting the agent’s normal work

When updating rules, adopt a “version management” mindset — rather than directly modifying old rules, explicitly tell the agent “the old rule is now deprecated; the new rule is as follows.” This avoids behavioral anomalies caused by mixing old and new rules.
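
One way to picture the version management mindset is a rule book that never overwrites a rule, only deprecates it. This is a sketch under assumptions: `RuleBook`, `RuleVersion`, and the sample rule texts are hypothetical names and values used for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class RuleVersion:
    version: int
    text: str
    deprecated: bool = False


class RuleBook:
    """Keeps every version of a rule; only the newest one is active."""

    def __init__(self) -> None:
        self._history: Dict[str, List[RuleVersion]] = {}

    def publish(self, rule_id: str, text: str) -> RuleVersion:
        versions = self._history.setdefault(rule_id, [])
        # Deprecate older versions instead of editing them in place.
        for old in versions:
            old.deprecated = True
        new = RuleVersion(version=len(versions) + 1, text=text)
        versions.append(new)
        return new

    def active(self, rule_id: str) -> Optional[RuleVersion]:
        for candidate in reversed(self._history.get(rule_id, [])):
            if not candidate.deprecated:
                return candidate
        return None

    def history(self, rule_id: str) -> List[RuleVersion]:
        return list(self._history.get(rule_id, []))


book = RuleBook()
book.publish("reply-length", "Keep replies between 150-300 words.")
book.publish("reply-length", "Keep replies under 200 words.")  # hypothetical update
print(book.active("reply-length").text)
```

Because the old version stays in the history, it remains possible to see which rule was in force when an earlier task was executed.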

4.2 Best Practices for Example-Based Learning

Principles for Selecting Examples

- Representativeness: Selected examples should represent typical scenarios, not extreme or rare cases. Extreme cases can serve as supplements but shouldn’t be the primary learning material.

- Contrast: The contrast between positive and negative examples should be as stark as possible, enabling the agent to immediately see the differences. If the differences between two examples are too subtle, the agent may not accurately extract the key distinctions.

- Progressiveness: Example difficulty should be progressive. Start by showing the most standard, typical cases, then gradually introduce more complex, special situations.

- Authenticity: Whenever possible, use real business cases as examples rather than fabricated idealized scenarios. Real cases contain details and complexity closer to what the agent will actually face.

How to Present Examples

When presenting examples, use the following structure:

- Scenario description: Briefly describe the background of this example

- Input: The information the agent would receive

- Expected output: The response you want the agent to produce

- Key point annotations: Highlight the most important aspects of this example, helping the agent understand what you focus on

For example:

Scenario: A customer purchased a high-value item, discovered minor defects upon receipt, and is emotionally agitated.

Customer email: “You charge this much and the quality is like this? I spent over $3,000 on a defective product! So disappointing! I want to return it for a full refund!”

Expected reply: “Dear Mr. Wang, first, we sincerely apologize for this extremely unpleasant experience. We completely understand your disappointment and frustration — spending over $3,000 only to receive a product with defects is unacceptable. I’ve initiated the return and refund process for you. We will arrange a courier to pick up the item from your location, and the refund will be returned via the original payment method within 2 business days after we receive the return. We’ll call ahead to confirm the pickup time. Once again, our deepest apologies for this poor experience. XX Company Customer Service Team”

Key points: (1) Empathize before solving — restate the customer’s emotions and the amount spent, expressing complete understanding (2) Don’t justify or deflect blame (3) Provide a clear solution and timeline (4) Proactively offer home pickup to minimize customer inconvenience (5) Double apology — one at the beginning and one at the end
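
The same four-part structure can be captured as a reusable template so that every example is presented consistently. A minimal sketch follows; `TeachingExample` and its field names are assumptions for illustration, and the sample texts are shortened from the case above.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TeachingExample:
    scenario: str           # background of the example
    input_text: str         # what the agent would receive
    expected_output: str    # the response you want
    key_points: List[str]   # what the agent should pay attention to

    def render(self) -> str:
        """Format the example in the four-part structure."""
        points = "\n".join(
            f"  ({i}) {point}" for i, point in enumerate(self.key_points, 1)
        )
        return (
            f"Scenario: {self.scenario}\n"
            f"Input: {self.input_text}\n"
            f"Expected output: {self.expected_output}\n"
            f"Key points:\n{points}"
        )


example = TeachingExample(
    scenario="High-value item with minor defects; customer is agitated.",
    input_text="I spent over $3,000 on a defective product!",
    expected_output="Dear Mr. Wang, we sincerely apologize...",
    key_points=["Empathize before solving", "Provide a clear timeline"],
)
rendered = example.render()
print(rendered)
```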

4.3 Techniques for Handling Feedback

Feedback is the most easily overlooked yet most impactful element in delegation interaction. Good feedback accelerates the agent’s growth, while poor feedback can cause confusion.

Principles of Effective Feedback

- Specific: “This isn’t good” is less effective than “The wording here is too aggressive — use ‘suggest’ instead of ‘must.’”

- Timely: Provide feedback immediately upon discovering issues. Don’t save everything for the end. The agent needs to associate feedback with specific execution behaviors.

- Constructive: Don’t just point out problems — also provide direction for improvement. “Don’t do this” is less effective than “Don’t do this; instead, do this.”

- Consistent: Feedback standards should remain consistent. Don’t think a certain style is good today and bad tomorrow — this confuses the agent.

- Balanced: Point out problems but also acknowledge what was done well. Feedback that consists only of criticism makes the agent overly conservative.

The Undo Mechanism

An important safety net in delegation interaction is the undo mechanism. When you discover that a previously taught rule is inappropriate, or that a previous confirmation was incorrect, you can undo it at any time.

Undoing isn’t simple “deletion” — it’s a conscious “correction” process:

- Explicitly tell the agent which rule or confirmation needs to be undone

- Explain the reason for undoing (helping the agent understand why things changed)

- If there’s a replacement rule, communicate the new rule simultaneously

- Confirm the agent has correctly executed the undo

This mechanism ensures flexibility in the teaching process — you don’t need to have everything figured out from the start. Making mistakes is part of learning, not only for the apprentice but for the mentor as well.
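
The four undo steps can be sketched as a single operation that refuses to run without a reason and reports whether the undo took effect. Everything here is hypothetical: `TaughtRules`, `UndoRecord`, and the sample rule texts are invented for illustration and do not describe how DesireCore stores rules.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class UndoRecord:
    rule_id: str
    reason: str                  # why the rule was withdrawn
    replacement: Optional[str]   # new rule text, if any


class TaughtRules:
    """Hypothetical store of rules the user has taught so far."""

    def __init__(self) -> None:
        self.active: Dict[str, str] = {}
        self.undo_log: List[UndoRecord] = []

    def teach(self, rule_id: str, text: str) -> None:
        self.active[rule_id] = text

    def undo(self, rule_id: str, reason: str,
             replacement: Optional[str] = None) -> bool:
        # Step 1: name exactly which rule is being undone.
        if rule_id not in self.active:
            raise KeyError(f"unknown rule: {rule_id}")
        # Step 2: a reason is mandatory so the change can be understood later.
        if not reason.strip():
            raise ValueError("an undo needs a reason")
        # Step 3: swap in the replacement, or simply withdraw the rule.
        if replacement is None:
            del self.active[rule_id]
        else:
            self.active[rule_id] = replacement
        self.undo_log.append(UndoRecord(rule_id, reason, replacement))
        # Step 4: confirm the store now reflects the undo.
        return self.active.get(rule_id) == replacement


rules = TaughtRules()
rules.teach("greeting", "Open every reply with 'Thank you for your message'.")
confirmed = rules.undo(
    "greeting",
    reason="too rigid for complaint emails",
    replacement="Open with gratitude or apology, depending on the situation.",
)
print(confirmed, rules.active["greeting"])
```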

4.4 Common Teaching Challenges and Solutions

Challenge One: Rule Conflicts

As the number of rules increases, so does the probability of conflicts. Solutions:

- Establish clear rule hierarchies (Core > Business > Preference > Temporary)

- Regularly review the rule base to clean up outdated and contradictory rules

- Explicitly assign priorities to potentially conflicting rules
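
The hierarchy Core > Business > Preference > Temporary can be expressed as an ordered enumeration, with an explicit priority as a tie-breaker inside a tier. This is a sketch only: the `Tier` values, the `TieredRule` type, and the tier assigned to each sample rule are assumptions.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List


class Tier(IntEnum):
    TEMPORARY = 1
    PREFERENCE = 2
    BUSINESS = 3
    CORE = 4


@dataclass
class TieredRule:
    name: str
    tier: Tier
    priority: int = 0   # tie-breaker within the same tier (assumed)


def resolve(conflicting: List[TieredRule]) -> TieredRule:
    """Pick the rule that wins: highest tier first, then highest priority."""
    if not conflicting:
        raise ValueError("no rules to resolve")
    return max(conflicting, key=lambda rule: (rule.tier, rule.priority))


winner = resolve([
    TieredRule("reply to VIP customers first", Tier.BUSINESS, priority=5),
    TieredRule("route legal-risk emails to a human", Tier.CORE),
    TieredRule("use a warm tone", Tier.PREFERENCE, priority=9),
])
print(winner.name)
```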

Challenge Two: Over-Teaching

Some users invest too much effort in the teaching stage, trying to teach all possible rules before starting any actual work. This often backfires. Solutions:

- Follow the “enough to proceed” principle — teach just enough knowledge for the current task, then start practicing

- Discover gaps through practice, then supplement teaching

- Remember: what the agent learns from practice is often more valuable than pure teaching

Challenge Three: Expectation Management

Expectations for the agent need to match its current learning stage. You can’t expect Stage Five performance during Stage One. Solutions:

- Maintain clear awareness of each stage’s capability range

- Accept the existence of a learning curve — early slowness enables later speed

- Focus on the trend of improvement rather than single-instance performance

Part Five: Auditability — How the Receipt System Ensures Delegation Transparency

The three core characteristics of delegation interaction are teachable, delegable, and auditable. The previous sections focused on teachability and delegability. This section focuses on auditability — the cornerstone of trust.

5.1 Why Auditability Is Critical

When you delegate work to an agent, you need to answer the following questions:

- What did it do?

- Why did it make this decision?

- Did its execution process comply with the rules?

- If something went wrong, which step caused the problem?

- How can the same problem be prevented from recurring?

If these questions can’t be answered, delegation can’t be established. No one will hand important work to a “black box” where only the result is visible, the process is hidden, and a wrong result gives no clue to its cause.

Auditability solves this trust problem. It ensures that everything has a verifiable record, transforming delegation from “blind trust” to “evidence-based trust.”

5.2 Design Philosophy of the Receipt System

DesireCore’s receipt system is the core implementation mechanism for auditability. Its design philosophy is: Every task execution generates a complete execution receipt, recording the entire process from reception to delivery.

A typical execution receipt contains the following information:

Task Metadata

- Task description: What the task is

- Delegation time: When the task was received

- Completion time: When delivery was completed

- Delegation level: Which level of delegation applies (fully guided/key checkpoint/result review/highly autonomous)

Execution Trail

- Step records: Detailed records of each execution step

- Decision rationale: At nodes requiring judgment, records of the agent’s reasoning

- Rule references: Which rules were referenced for each action

- Timeline: Execution time for each step

Exception Log

- Exception description: Exceptional circumstances encountered during execution

- Handling method: How they were handled (autonomous handling/consulting user)

- Resolution: Whether the exception was resolved

Deliverables

- Result content: The actual delivered result

- Self-assessment: The agent’s evaluation of its own delivery quality

- Improvement suggestions: Potential improvement areas identified by the agent
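
To make the four groups of fields concrete, the sketch below models a receipt as nested records that serialize to JSON. The class and field names are assumptions derived from the list above; the actual DesireCore receipt format is not specified in this article.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime
from typing import List, Optional


@dataclass
class Step:
    description: str
    rule_refs: List[str] = field(default_factory=list)
    decision_rationale: Optional[str] = None
    duration_seconds: float = 0.0


@dataclass
class ExceptionEntry:
    description: str
    handling: str    # "autonomous" or "consulted user"
    resolved: bool


@dataclass
class Receipt:
    # Task metadata
    task_description: str
    delegation_level: str
    delegated_at: datetime
    completed_at: datetime
    # Execution trail and exception log
    steps: List[Step] = field(default_factory=list)
    exceptions: List[ExceptionEntry] = field(default_factory=list)
    # Deliverables
    result: str = ""
    self_assessment: str = ""
    improvement_suggestions: List[str] = field(default_factory=list)

    def to_json(self) -> str:
        # default=str turns datetime values into readable strings.
        return json.dumps(asdict(self), default=str, indent=2)


receipt = Receipt(
    task_description="Reply to a return request",
    delegation_level="key checkpoint",
    delegated_at=datetime(2025, 4, 5, 9, 0),
    completed_at=datetime(2025, 4, 5, 9, 3),
    steps=[Step("Draft reply", rule_refs=["return-policy"],
                decision_rationale="return-related replies mention the policy")],
    result="Reply sent",
)
print(receipt.to_json())
```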

5.3 Three Levels of Traceability

The receipt system provides three levels of traceability:

Level One: Result Tracing

This is the most basic level — you can view the final execution result of any task. It answers the question “what was done.”

Level Two: Process Tracing

This is the intermediate level — you can trace back the complete execution process, including every step and every decision. It answers the question “how was it done.”

Level Three: Logic Tracing

This is the deepest level — you can trace the logical basis behind every decision, including which rules were referenced and why this approach was chosen over alternatives. It answers the question “why was it done this way.”

These three levels of traceability form a complete audit system. In daily work, you usually only need Level One tracing — just check the results. But when problems arise, you can dive into Level Two or even Level Three to precisely identify root causes.
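
The three levels can be read as progressively deeper views over the same record. The sketch below uses a plain dictionary as a stand-in receipt; its keys and contents are hypothetical.

```python
from typing import Any, Dict, List

receipt: Dict[str, Any] = {
    "result": "Refund reply sent to Ms. Li",
    "steps": [
        {
            "action": "classify email as return request",
            "rule_refs": ["return-policy"],
            "rationale": "customer asks to return a speaker with static noise",
        },
        {
            "action": "draft reply mentioning the 7-day return policy",
            "rule_refs": ["return-policy", "reply-format"],
            "rationale": "return-related replies must mention the policy",
        },
    ],
}


def trace_result(r: Dict[str, Any]) -> str:
    """Level One: what was done."""
    return r["result"]


def trace_process(r: Dict[str, Any]) -> List[str]:
    """Level Two: how it was done, step by step."""
    return [step["action"] for step in r["steps"]]


def trace_logic(r: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Level Three: why each step was done, with the rules it relied on."""
    return [
        {"action": s["action"], "rule_refs": s["rule_refs"], "why": s["rationale"]}
        for s in r["steps"]
    ]


print(trace_result(receipt))
print(trace_process(receipt))
print(trace_logic(receipt)[1]["rule_refs"])
```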

5.4 The Cascading Effects of Auditability

Auditability isn’t just a “safety feature” — it triggers a series of positive cascading effects:

Effect One: Accelerated Trust Building

When you can review the agent’s execution process and decision rationale at any time, building trust becomes easier. Trust doesn’t come from “it says it’s reliable” but from “I’ve seen evidence that it’s reliable.”

Effect Two: Precise Problem Diagnosis

When a task goes wrong, you don’t need to guess at the cause. Through the receipt system, you can precisely identify which step the problem occurred at and which rule (or lack thereof) caused it. This makes correction and improvement efficient.

Effect Three: Quantified Assessment of Teaching Effectiveness

By analyzing receipt data, you can evaluate teaching effectiveness — determining which types of tasks the agent performs stably and which areas need reinforcement. This provides data-driven support for planning the next phase of teaching.
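
As one possible way to quantify this, receipts could be reduced to (task type, passed review) pairs and summarized as a pass rate per task type. The record shape, the sample data, and the 0.9 threshold below are all assumptions for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def pass_rates(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of tasks per type that passed review without correction."""
    totals: Dict[str, int] = defaultdict(int)
    passed: Dict[str, int] = defaultdict(int)
    for task_type, ok in records:
        totals[task_type] += 1
        if ok:
            passed[task_type] += 1
    return {task_type: passed[task_type] / totals[task_type] for task_type in totals}


def needs_reinforcement(rates: Dict[str, float], threshold: float = 0.9) -> List[str]:
    """Task types whose pass rate falls below the (assumed) threshold."""
    return sorted(t for t, rate in rates.items() if rate < threshold)


# Hypothetical review outcomes extracted from receipts.
records = [
    ("refund", True), ("refund", False), ("refund", False), ("refund", True),
    ("inquiry", True), ("inquiry", True), ("inquiry", True),
]
rates = pass_rates(records)
print(rates, needs_reinforcement(rates))
```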

Effect Four: Knowledge Asset Accumulation

The decision rationales and rule references recorded in receipts are themselves valuable knowledge assets. They reflect not only the agent’s capability level but also your business rule system. Over time, this receipt data becomes an important component of enterprise knowledge management.

Effect Five: Compliance and Security Assurance

In industries with strict compliance requirements (such as finance, healthcare, and law), auditability isn’t just a “benefit” — it’s a “necessity.” The complete audit trail provided by the receipt system can satisfy regulatory requirements for AI decision-making process transparency.

5.5 Pause, Correct, Rollback: Control Rights During Delegation

Auditability is also reflected in the user’s control rights over the delegation process. In delegation interaction, the user always maintains three key controls:

Pause: At any moment, you can pause the agent’s execution. Regardless of what stage the task is at, a single “pause” command brings it to an immediate halt. After pausing, the agent reports its current progress and status, awaiting your further instructions.

Correct: When issues are discovered during review, you can make direct corrections. This includes correcting erroneous outputs, fixing inappropriate decision logic, and adjusting execution direction. Corrections don’t just change the current task’s execution — they also affect the agent’s understanding of related rules.

Rollback: If a step or decision is found to be wrong, you can roll back the execution state to a previous point. This is similar to “version rollback” in code — instead of starting from scratch, you return to the most recent correct state and re-execute from there.

These three controls ensure that delegation isn’t “letting go completely.” You step back but you’re never out of the loop; you trust, but it’s not blind trust. You always retain ultimate decision-making authority and control.
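
A checkpoint-based sketch shows how the three controls can fit together. It is a toy model under stated assumptions: `ControlledExecution` is a hypothetical class, steps are plain strings, and a correction here only changes the plan for the current task; it does not model the effect on the agent's understanding of related rules.

```python
import copy
from typing import Any, Dict, List


class ControlledExecution:
    """Runs steps one at a time and checkpoints after each one."""

    def __init__(self, steps: List[str]) -> None:
        self.steps = list(steps)
        self.state: Dict[str, Any] = {"done": []}
        self.checkpoints: List[Dict[str, Any]] = [copy.deepcopy(self.state)]
        self.paused = False

    def run_next(self) -> bool:
        """Run one step unless paused or finished; return whether it ran."""
        index = len(self.state["done"])
        if self.paused or index >= len(self.steps):
            return False
        self.state["done"].append(self.steps[index])
        self.checkpoints.append(copy.deepcopy(self.state))
        return True

    def pause(self) -> str:
        """Halt execution and report current progress."""
        self.paused = True
        return f"paused after {len(self.state['done'])} of {len(self.steps)} steps"

    def resume(self) -> None:
        self.paused = False

    def correct(self, index: int, new_step: str) -> None:
        """Replace a step that has not been executed (or was rolled back)."""
        if index < len(self.state["done"]):
            raise ValueError("roll back before correcting an executed step")
        self.steps[index] = new_step

    def rollback(self, completed_steps: int) -> None:
        """Return to the checkpoint taken after `completed_steps` steps."""
        if not 0 <= completed_steps < len(self.checkpoints):
            raise ValueError("no such checkpoint")
        self.state = copy.deepcopy(self.checkpoints[completed_steps])
        del self.checkpoints[completed_steps + 1:]


run = ControlledExecution(["classify email", "draft reply", "send reply"])
run.run_next()
run.run_next()
status = run.pause()
run.rollback(1)                                   # keep only the first step
run.correct(1, "draft reply with return policy")  # fix the plan from there
run.resume()
run.run_next()
print(status, run.state["done"])
```

Rolling back to the most recent correct checkpoint, instead of restarting, mirrors the “version rollback” analogy in the text.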

Part Six: Practical Cases — Delegation Interaction in Action

Theory needs practice for validation. Below, we present four concrete application scenarios demonstrating how delegation interaction works in real-world settings and the value it delivers.

6.1 Scenario One: Intelligent Assistant for Customer Service Teams

Background

An e-commerce platform’s customer service team needs to handle hundreds of customer emails daily, covering returns/exchanges, complaints, inquiries, after-sales support, and more. The team leader wants to improve processing efficiency through AI but is concerned about uncontrollable AI reply quality.

Application of Delegation Interaction

Stage One (Teach):

The team leader first conveys core rules to the agent:

- Company return/exchange policies (7-day no-questions-asked return, 30-day return for quality issues, etc.)

- Service standards for different customer tiers (VIP customers get priority handling, flexible returns)

- Reply tone and format standards

- Emails involving legal risk must be routed to human agents

- Customer privacy information must never be displayed in plain text in replies

Stage Two (Demonstrate):

Provides reply examples for various typical email types:

- Standard replies for simple return/exchange requests

- Soothing replies for emotionally charged customer complaints

- Professional replies for product inquiries

- Counterexamples of incorrect replies with their issues highlighted

Stage Three (Execute + Review):

The agent begins processing real customer emails, but every reply requires customer service staff review before being sent. During this stage, the team leader discovers the agent occasionally makes errors in refund amount calculations, so more detailed supplementary rules and examples are added.

Stages Four and Five:

After two weeks of refinement, the agent’s reply quality for most routine emails consistently meets standards. The team switches to only manually reviewing complex emails, while the agent handles simple emails autonomously.

Results

- Average email processing time reduced from 15 minutes to 3 minutes

- Customer satisfaction maintained (quality monitored through the receipt system)

- Customer service team freed to focus on complex cases

- Every email sent has a complete receipt record, traceable at any time

6.2 Scenario Two: Writing Collaboration for Content Teams

Background

A tech media company’s content team needs to produce multiple industry analysis articles per week. The editor-in-chief has clear writing style and quality standards, but as the team expands, new writers’ article quality varies widely. The editor hopes to use AI to help new writers adapt more quickly to the team’s writing standards.

Application of Delegation Interaction

Teaching Stage:

The editor conveys writing rules to the agent:

- Article structure: Lead with insights, support with evidence, back up with data

- Language style: Professional but not academic, insightful but not extreme

- Citation standards: All data must include sources, no data older than one year

- Prohibited: No absolutist language (“the best,” “the only,” “inevitably”), no unsupported predictions

- Headline standards: Concise and impactful, no more than 15 words, no clickbait

Demonstration Stage:

Award-winning articles and rejected articles are provided as positive and negative examples, with annotations highlighting each article’s strengths and shortcomings.

Collaboration Model:

After new writers complete their first draft, it’s submitted to the agent for a first round of review. The agent provides revision suggestions based on learned rules, with rule references for each suggestion. Writers revise and then submit to the editor-in-chief for final review.

Results

- Time for new writers to produce articles meeting standards reduced from an average of 2 months to 3 weeks

- Editor-in-chief’s final review workload reduced by approximately 60%

- Writing standards systematically consolidated through the agent’s continuous feedback

- Every review process is fully documented, becoming part of the team’s knowledge base

6.3 Scenario Three: Task Coordination in Project Management

Background

A project manager for a mid-sized software development team needs to simultaneously manage progress across multiple projects, coordinate resource allocation, track risks, and handle emergencies. The project manager wants a reliable assistant to share the burden of daily management work.

Application of Delegation Interaction

Teaching Stage:

The project manager teaches the agent core project management rules:

- Format and key points for daily standup meeting notes

- Project status assessment standards (green/yellow/red light)

- Risk escalation conditions and procedures

- Resource conflict handling priorities

- Communication preferences for various stakeholders

Progressive Delegation:

Starting with simple meeting note organization, gradually expanding to project progress tracking, risk log maintenance, weekly report generation, and more. Each piece of work goes through the complete cycle of teaching—practice—review—confirmation.

Mature Phase Operation:

The agent handles most of the administrative aspects of daily project management, freeing the project manager to invest more energy in key decisions and team management. When the agent encounters situations requiring judgment (such as whether a project’s delay risk needs escalation), it attaches its judgment and rationale in the receipt for the project manager’s final decision.

Results

- Project manager’s time spent on daily administrative work reduced by approximately 70%

- Project report accuracy and timeliness significantly improved

- Risk warnings shifted from being “discovered after meetings” to “real-time monitoring”

- All management actions have complete receipt records, satisfying the company’s project management process audit requirements

6.4 Scenario Four: Personal Productivity Enhancement

Background

A startup CEO needs to handle large volumes of emails, meeting scheduling, business communications, and document processing every day. He wants to use AI to reduce the burden of routine tasks so he can focus more on strategic thinking and key decisions.

Application of Delegation Interaction

Teaching Stage:

The CEO gradually conveys his work habits and preferences to the agent:

- Email classification and priority rules (which need immediate attention, which can wait)

- Scheduling preferences (mornings reserved for deep work, afternoons for meetings and communication)

- Frequently used document templates and formats

- Relationship status and communication considerations for various business partners

- Decision frameworks for specific scenarios

Progressive Delegation:

Starting with email screening, gradually expanding to calendar management, meeting material preparation, business communication drafting, and more. Each new task delegation goes through a complete teaching and verification process.

Highly Autonomous Phase:

The agent can independently handle email screening and classification, schedule optimization suggestions, routine business replies, meeting notes, and action item tracking. The CEO only needs to intervene at key decision points.

Results

- 2-3 hours of routine work time saved daily

- Response speed for important emails improved by 50%

- Schedule quality improved (less fragmented time)

- All business communications fully documented for easy retrospection and review

Conclusion: The Impact of Delegation Interaction on the Future of Work

From the Tool Paradigm to the Partner Paradigm

Looking back at the history of AI technology development, we’ve progressed from “automation tools” to “intelligent assistants.” But even so-called “intelligent assistants” still perpetuate the tool paradigm in their interaction model — you ask, it answers; you stop, it stops. It has no memory, no growth, no initiative.

Delegation interaction represents the next paradigm leap — from the tool paradigm to the partner paradigm. In the partner paradigm, AI is no longer a passive responder but a collaborative partner that can be cultivated, can grow, can be trusted, and can be delegated to.

The significance of this transformation extends far beyond the technical level. It changes not just “what AI can do” but “how humans and AI work together.”

A New Vessel for Knowledge

Under the delegation interaction model, the rules, preferences, and experiences you teach the agent are essentially the digital crystallization of your work knowledge.

Traditionally, work knowledge resides in employees’ minds, transferred through verbal instruction, documentation, and practical accumulation. This transfer method is inefficient, prone to loss, and difficult to standardize.

Delegation interaction offers a new possibility — through the “Teach” and “Demonstrate” primitives, work knowledge is systematically transferred to the agent, and through the “Execute” and “Review” primitives, it’s continuously validated and refined in practice. The agent becomes a living vessel for organizational knowledge — not just storing knowledge but applying and testing it in real work.

The Evolution of Human Roles

Delegation interaction won’t replace humans — it will transform human roles. As more routine execution work gets delegated to agents, human roles will evolve in the following directions:

From executor to coach: Your value is no longer measured by how much work you personally do but by how effectively you can train agents. A good “mentor” enables agents to become independently capable faster; a poor “mentor” stays stuck in the hand-holding stage.

From operator to reviewer: You no longer need to personally handle everything, but you need the ability to judge the quality of the agent’s output. This requires deeper understanding of work standards, beyond just execution-level proficiency.

From detail manager to strategic thinker: When routine details are handled by agents, you can invest more time and energy in areas requiring uniquely human capabilities — creative thinking, strategic judgment, relationship management, and more.

The Technical Foundation of Trust

The core of delegation interaction isn’t just a technological breakthrough — it’s a trust mechanism breakthrough.

In traditional AI, trust is a subjective feeling — you feel AI is reliable, so it’s reliable; you feel it isn’t, and nothing can change your mind, because you have no evidence either way.

Delegation interaction, through auditability, the receipt system, and end-to-end traceability capabilities, builds trust on an objective evidence foundation. You don’t need to “believe” the agent is reliable — you can verify that it’s reliable. Every successful task delivery, every complete execution receipt, every precise rule reference serves as an objective basis for trust.

This evidence-based trust is more solid and more scalable than feeling-based trust.

Looking Ahead: A New Era of Collaboration

The emergence of delegation interaction marks a new era in human-AI collaboration. In this era:

- AI is no longer a disposable tool but a digital partner worthy of continuous investment that yields continuous returns. Every minute you spend on teaching is an investment in future efficiency gains.

- Work knowledge no longer exists only in people’s minds but can be systematically transferred and preserved. Even when team members leave, the work knowledge they’ve accumulated can continue to deliver value through agents.

- Human-machine collaboration is no longer the one-way relationship of “humans using machines” but the bidirectional relationship of “mentor and apprentice co-creating.” Humans teach AI, AI helps humans; the agent’s feedback also helps humans better understand and optimize their own workflows.

- Trust is no longer a psychological problem but an engineering problem that can be solved with data and evidence.

Delegation interaction isn’t the destination — it’s a starting point. Through the Six Primitives Framework and robust auditability mechanisms, DesireCore has taken the critical step from the “tool era” to the “partner era.” And this step is redefining how we collaborate with AI — from “using tools” to “mentoring apprentices,” from “one-time consumption” to “continuous accumulation,” from “blind trust” to “evidence-based verification.”

The future is here. Are you ready to train your first “digital apprentice”?